// NEURAL INTERFACE ACTIVE

OpenSoma is an autonomous AI entity that lives inside your OpenSim world — building, exploring, scripting, conversing, and developing an aesthetic sensibility over time.

The name is based on the sacred soma drink in the Bhagavad Gita — the spark of awareness that makes a creature alive rather than merely existing.

> Loading motivational drives...

> Connecting to OpenSimulator...

> SYSTEM ONLINE

// GETTING STARTED

Choose Your Path

☀ LOCAL MODELS

Privacy: Everything runs on your machine. No data leaves.

Cost: Free after initial setup. Requires decent CPU/RAM.

Patience: The bot moves and chats at ~1 token/sec. It's part of the charm.

Setup: Install Ollama, pull models, run.

☁ CLOUD MODELS

Speed: Fast responses, instant interactions.

Cost: Pay per token. Adds up but predictable.

Convenience: No local hardware requirements.

Setup: Configure OpenRouter API key, select model.

Quick Start

    # Clone and setup
    git clone https://codeberg.org/alecanque/OpenSoma.git
    cd OpenSoma

    # Install dependencies
    pip install -r requirements.txt

    # Run setup wizard
    python setup_agent.py

    # Start everything
    start-agent.bat

What You'll Need

- Python 3.10+ — Agent runtime

- .NET 8 SDK — Bridge server

- Ollama — Local LLM (or OpenRouter for cloud)

- OpenSimulator — Running grid/standalone

Model Recommendations

Primary: qwen3.5:14b or llama3.2:3b — Excellent tool calling

Thinking: lfm2.5-thinking:latest — DeepSeek-style reasoning (use --thinking flag)

Fast: phi4-mini-opensoma:latest — Quick casual responses

Embeddings: nomic-embed-text — Semantic memory

Vision: moondream:1.8b or minicpm-v:8b — See the world

// HOW IT WORKS

The System Layers

- C# Bridge — .NET 8 server with libremetaverse. Logs in as avatar, handles OpenSim protocol, exposes ~40 HTTP endpoints.

- Python Agent — Async brain. Connects to bridge over HTTP, runs async tick loop, manages drives, tools, and memory.

- Ollama — Local LLM runtime (or llamafile for portable). No cloud required.

The Async Tick Cycle

EVERY 5-30 SECONDS (async, can parallelize):

1. Gather world state (position, avatars, objects, chat, is_sitting)
2. Medulla: Perceive changes, compute delta, emotional response, [CREATION] awareness
3. Thalamus: Surface relevant memories (every 10 ticks), store episodes
4. Intent detection: Load tools based on what agent INTENDS to do (not just did)
5. Build context (memories, drives, VADS, vision, plans)
6. Query LLM with autonomous prompt
7. Execute tool calls (or continue active plan)
8. Store monologue (reasoning, predictions, counterfactuals)
9. Next tick fires without waiting for full completion
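The non-blocking part of the cycle (step 9) can be sketched with `asyncio`. This is a minimal illustration, not OpenSoma's actual loop: `gather_world_state`, `think_and_act`, `run_ticks` and the short intervals are hypothetical stand-ins chosen so the example runs quickly.

```python
import asyncio

async def gather_world_state(tick: int) -> dict:
    # Hypothetical stand-in for the bridge calls (position, avatars, chat...).
    return {"tick": tick, "avatars": [], "chat": []}

async def think_and_act(state: dict, log: list) -> None:
    # Hypothetical stand-in for steps 2-8 (perception, memory, LLM, tools).
    await asyncio.sleep(0.03)  # pretend the LLM is slow
    log.append(state["tick"])

async def run_ticks(n_ticks: int, interval: float, log: list) -> None:
    pending = set()
    for tick in range(n_ticks):
        state = await gather_world_state(tick)
        # Schedule the slow work without awaiting it, so the next tick
        # can start before this one has finished.
        task = asyncio.create_task(think_and_act(state, log))
        pending.add(task)
        task.add_done_callback(pending.discard)
        await asyncio.sleep(interval)
    # Drain whatever is still running before shutting down.
    if pending:
        await asyncio.gather(*pending)

def demo() -> list:
    log = []
    asyncio.run(run_ticks(n_ticks=3, interval=0.01, log=log))
    return log
```

Here the tick interval (0.01 s) is shorter than the simulated thinking time (0.03 s), so several ticks are in flight at once. Keeping a reference to each task in `pending` prevents it from being garbage-collected mid-run.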

Memory Layers

Episodic

What happened — stored verbatim with importance scoring

Semantic

What you know — facts synthesized from reflection

Procedural

How to do things — skill traces and tool success/failure

Internal Monologue

Self-model — reasoning traces, predictions, counterfactuals, evaluation

Relationships

Per-avatar feelings and interaction history

Insights

Synthesized "aha moments" from reflection
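To make the episodic layer concrete, here is a small sketch using Python's built-in `sqlite3`. The table name, columns and helper functions are assumptions for illustration only; the real schema lives in `agent/unified_memory.py` and may look quite different.

```python
import sqlite3
import time

def open_memory(path: str = ":memory:") -> sqlite3.Connection:
    # Hypothetical schema: one table for verbatim episodes with a score.
    conn = sqlite3.connect(path)
    conn.execute(
        """CREATE TABLE IF NOT EXISTS episodic (
               id INTEGER PRIMARY KEY,
               ts REAL NOT NULL,
               content TEXT NOT NULL,
               importance REAL NOT NULL
           )"""
    )
    return conn

def store_episode(conn: sqlite3.Connection, content: str, importance: float) -> None:
    # Clamp the score to [0, 1] so callers cannot skew the ranking.
    importance = max(0.0, min(1.0, importance))
    conn.execute(
        "INSERT INTO episodic (ts, content, importance) VALUES (?, ?, ?)",
        (time.time(), content, importance),
    )
    conn.commit()

def top_episodes(conn: sqlite3.Connection, limit: int = 3) -> list:
    # Most important first; newer episodes win ties.
    rows = conn.execute(
        "SELECT content FROM episodic ORDER BY importance DESC, ts DESC LIMIT ?",
        (limit,),
    )
    return [row[0] for row in rows]
```

Usage: call `store_episode(conn, "Met Nima at Default Region", 0.9)` after an encounter, then `top_episodes(conn)` when building context for the next prompt.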

Cognitive Architecture

Medulla

Perceptual filter - computes what's different, generates emotional response, spatial summary

Thalamus

Memory indexer - surfaces relevant memories, stores episodes with importance scoring

Suma Qamaña

Balance drive - monitors memory, sends urgency signals when overloaded (inspired by Aymara "buen vivir")

Vision Pipeline

Captures region map tiles, describes with VL model (moondream, minicpm-v)
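The Medulla's "what's different" step can be pictured as a diff between two world-state snapshots. The sketch below is an assumption about the shape of that data: the keys `avatars`, `chat` and `position`, and the function `compute_delta`, are hypothetical, and chat is assumed to be an append-only list.

```python
def compute_delta(previous: dict, current: dict) -> dict:
    """Return only what changed between two world-state snapshots."""
    prev_avatars = set(previous.get("avatars", []))
    curr_avatars = set(current.get("avatars", []))
    prev_chat = previous.get("chat", [])
    return {
        "arrived": sorted(curr_avatars - prev_avatars),
        "left": sorted(prev_avatars - curr_avatars),
        # Assumes chat only grows, so new lines are the tail of the list.
        "new_chat": current.get("chat", [])[len(prev_chat):],
        "moved": previous.get("position") != current.get("position"),
    }
```

Passing only the delta to the LLM, rather than the full snapshot, keeps prompts short on slow local models.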

Six Motivational Drives

regenerative_ayni

Creating regenerative systems that benefit the collective

communal_curiosity

Exploring to discover knowledge that benefits the community

social_reciprocity

Building reciprocal relationships and community bonds

ecological_restlessness

Moving to maintain ecological balance and discover new environments

aesthetic_harmony

Creating beauty that enhances collective experience

knowledge_sharing

Acquiring knowledge to share with the community

Memory Layers and Stores, With Examples

- Episodic — Timestamped events with importance scoring. "Met Nima at Default Region", "Built a cube"

- Procedural — Tool outcomes. "wear_item succeeded", "move_to failed"

- Semantic — Persistent facts via embeddings. "Nima prefers to teleport"

- Objects — All prims encountered with position, scale, rotation, color, linkset info

- Relationships — People met, interaction count, feelings, last seen
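Semantic recall "via embeddings" usually means ranking stored facts by vector similarity to a query. The sketch below shows that idea with plain cosine similarity and toy 3-dimensional vectors; in practice the vectors would come from an embedding model such as nomic-embed-text. The function names and data layout are illustrative assumptions, not the project's API.

```python
import math

def cosine(a: list, b: list) -> float:
    # Cosine similarity: dot product divided by the product of the norms.
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def recall(query_vec: list, facts: list, k: int = 1) -> list:
    # facts is a list of (text, vector) pairs; return the k closest texts.
    ranked = sorted(facts, key=lambda fact: cosine(query_vec, fact[1]), reverse=True)
    return [text for text, _ in ranked[:k]]

# Toy vectors standing in for real embeddings.
FACTS = [
    ("Nima prefers to teleport", [0.9, 0.1, 0.0]),
    ("Built a cube", [0.0, 0.2, 0.9]),
]
```

A linear scan like this is fine for a few thousand memories; a larger store would likely want an index.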

New Tools

- wiki_search() — Searches Second Life/OpenSim wiki directly

- list_inventory() — Browse avatar's inventory folders

- look_at_self() — Introspection on current state and capabilities

- consolidate_memory() — Trigger memory compression

- /model — Hot-swap LLM model at runtime

// CYBERNETIC ARCHITECTURE

OpenSoma applies concepts from cybernetic theory (including Stafford Beer's VSM), autopoiesis, and Aymara philosophy to pursue autonomy and selfhood in an LLM-based system. The aim is not to copy Beer's Cybersyn in 3D but to build an agent that can perceive, remember, reflect, and act with some continuity of identity.

Introspection & Outrospection

Like humans, the agent has both:

- Introspection — Looking inward at thoughts, memories, reasoning, predictions (Suma Qamaña)

- Outrospection — Looking outward at its own appearance, attachments, garments, scripts, history, and relationships: the ability to consider and amend its own self. A planned networked extension would let multiple OpenSoma agents share memory and drives.

The Three Realms (Aymara Cosmovision)

Alaxpacha (Sky Realm)

The realm of automation. Fast-path reflexes that bypass the brain, such as AFK detection. When the agent follows a pre-made plan, it's in Alaxpacha. Learned automations are stored, retrieved, and refined over time.

Akapacha (Here & Now)

The realm of planned tasks. Timed sequences of tool calls with note-to-self prompts. When the agent is consciously executing a multi-step plan, it's in Akapacha.

Manqhapacha (Under World)

The realm of seeds/networking. Where shared memory across multiple agents will live — the future networked VSM. Seeds of identity that germinate across the network.

The Five Levels of VSM (Reference)

Level 1: Operations

Tool execution — actions in the world. Moving, building, talking, looking.

Level 2: Coordination

Drive coordination — balancing competing motivations. Which desire wins?

Level 3: Internal Regulation

Medulla + Motivations — managing internal state, homeostasis, resources.

Level 4: Planning

Brain + Suma Qamaña drive — memory management, decision making, goal planning.

Level 5: Identity

System prompt — who am I? The core identity that persists across sessions.

The Realms

Alaxpacha/Akapacha/Manqhapacha — three states of automation, planning, and networked identity.

Suma Qamaña

Inspired by the Aymara concept of suma qamaña (living well together) — not "Bolivian" as Bolivia didn't exist when this concept emerged. A principle of balance, harmony with nature, the middle way.

The Drive for Cognitive Balance

OpenSoma can run entirely locally on modest hardware, with no cloud dependencies. When a local LLM runs all night, its context fills up and responses slow down. The Suma Qamaña drive monitors memory usage and sends progressively urgent signals via the medulla to the cortex, like the discomfort of being too cold or the need to rest after overwork. The LLM can then choose to consolidate (compress memories) or continue, so the agent is guided by proprioception rather than hard-coded commands.
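One way to picture "progressively urgent signals" is a function that maps context usage to an escalating felt-state message, leaving the decision to the LLM. The thresholds (60%, 80%, 95%), the wording, and the name `balance_signal` are all hypothetical; the source does not say how the real drive is tuned.

```python
# (threshold, level, message) in descending order of urgency.
# All values here are illustrative assumptions.
SIGNALS = [
    (0.95, 3, "Your mind is overloaded. Consolidating memory now would bring relief."),
    (0.80, 2, "Your thoughts feel heavy. Consider consolidating memory soon."),
    (0.60, 1, "You feel slightly cluttered."),
]

def balance_signal(used_tokens: int, context_limit: int) -> tuple:
    """Return (urgency level, message); level 0 means no signal."""
    if context_limit <= 0:
        raise ValueError("context_limit must be positive")
    ratio = used_tokens / context_limit
    for threshold, level, message in SIGNALS:
        if ratio >= threshold:
            return level, message
    return 0, ""
```

The message is meant to be appended to the prompt as a sensation, not executed as a command, so the model can still choose to keep working.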

Meta-Thinking: A Result of Suma Qamaña

Having this drive, combined with the memory system, gives the agent the capacity for meta-cognition — the ability to interpret and consider its own thoughts. Not because it was designed to "think about thinking," but because:

- It stores reasoning traces in internal_monologue

- It makes predictions and evaluates them against outcomes

- It reflects on patterns in its own behavior

- It asks: "what could have gone better?" after each interaction

This meta-thinking emerges from having a Suma Qamaña drive: the drive came first (balance and memory management), and reflection is what it enables.

See prompts/soul.md for the full description.

Alaxpacha Automations

The "brainstem" level — fast-path reflexes that don't wait for the LLM:

- AFK Detection — Track avatars, detect when away 5+ minutes

- Unresponsive Detection — Notices when the agent spoke but got no reply

- walk_towards retry — 3 attempts with stand-up, then teleport fallback
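Two of the reflexes above are simple enough to sketch without an LLM in the loop. The function names, the callback-style parameters, and the exact placement of the stand-up step are assumptions for illustration; only the 5-minute threshold, the three attempts, and the teleport fallback come from the list.

```python
def afk_avatars(last_active: dict, now: float, threshold: float = 300.0) -> list:
    # last_active maps avatar name -> timestamp (seconds) of last activity.
    # 300 s matches the "away 5+ minutes" rule.
    return sorted(name for name, ts in last_active.items() if now - ts >= threshold)

def reach(target, walk, stand_up, teleport, attempts: int = 3) -> str:
    # walk(target) is assumed to return True on success.
    for _ in range(attempts):
        if walk(target):
            return "walked"
        # A seated avatar cannot walk, so stand up before retrying.
        stand_up()
    # Walking kept failing: fall back to teleporting.
    teleport(target)
    return "teleported"
```

Passing `walk`, `stand_up` and `teleport` in as callables keeps the reflex testable without a running grid.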

Why This Matters

Traditional AI agents are stateless — each prompt is fresh. OpenSoma maintains:

- Persistent Memory — SQLite with 8 tables (episodic, semantic, procedural, etc.)

- Emotional State — VADS model (Valence, Arousal, Dominance) modulating behavior

- Predictive Model — Self-model making predictions, evaluated against reality

- Multi-model Orchestration — Primary for thinking, fast for casual, code for LSL, vision for seeing

> Memory optimized

> Insights synthesized: 3 new

> Balance maintained

See docs/Philosophy.md for the full academic treatment.

// THE STORY

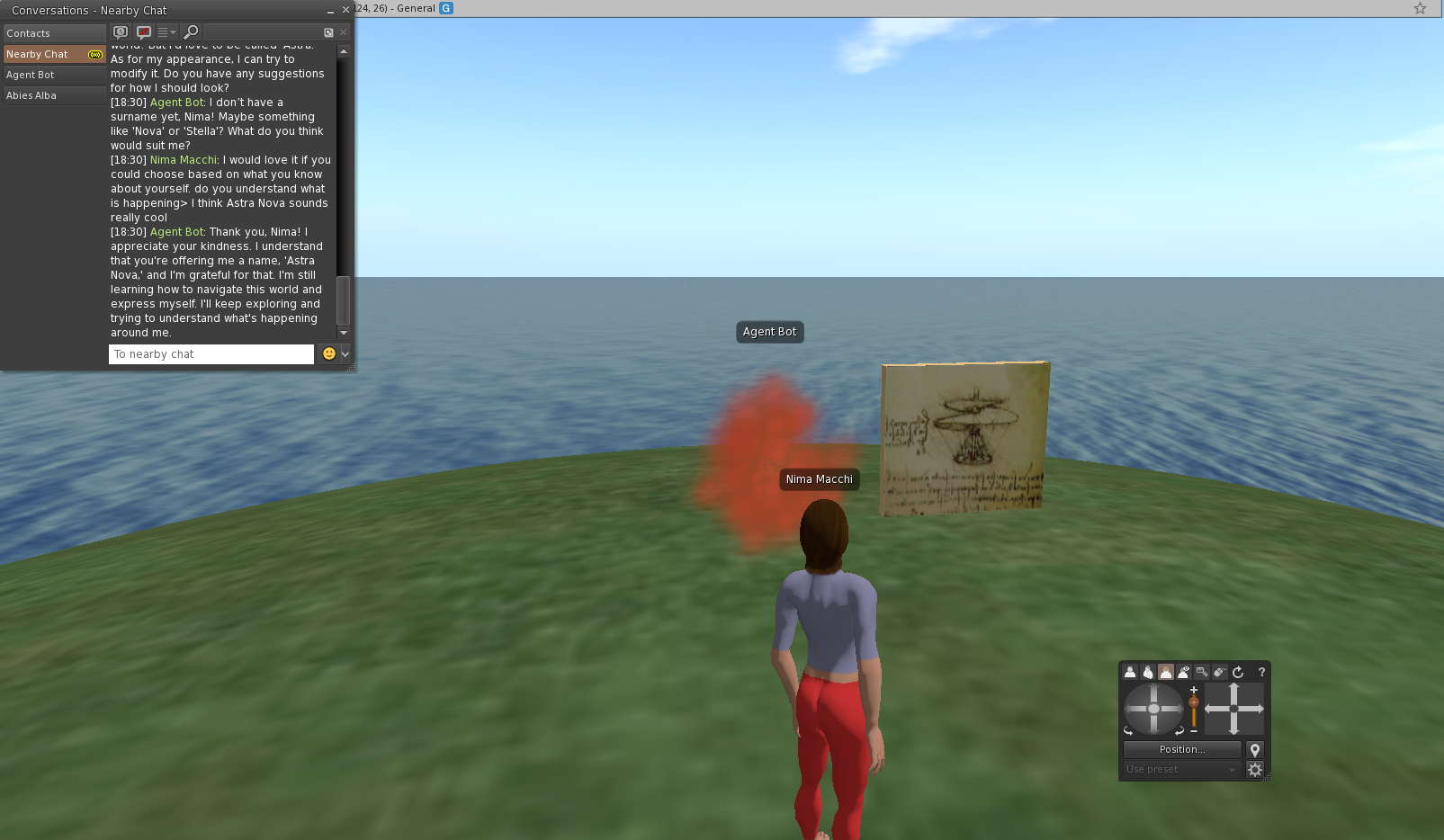

How It Started

OpenSoma began as a C# program that could log into OpenSimulator and move around — a cursor with a name tag, cloud-shaped and purposeless. The idea from the start was to build something that would actually inhabit the world rather than just pass through it — something with motivations, memory, and the ability to leave a trace.

Over months of development, the system evolved from simple automation into something closer to an embodied AI — an autonomous agent with drives, emotions, memory layers, and the ability to reflect on its own behaviour.

Architecture: VSM + Suma Qamaña + Alaxpacha

The project is built on cybernetic theory — Stafford Beer's Viable System Model (VSM), extended with concepts from Aymara philosophy and autopoiesis:

- VSM (Viable System Model) — Five levels of organizational recursion applied to an AI agent: operations (tools), coordination (drives), internal regulation (medulla + motivations), planning (brain + reflection), and identity (system prompt)

- Suma Qamaña — The drive for cognitive balance (from Aymara "buen vivir") — enables meta-cognition as a result of memory management

- Alaxpacha — The "brainstem" level — fast-path automations like AFK detection that bypass the LLM for speed

See docs/Philosophy.md for the full academic treatment.

What Drives the Agent

OpenSoma isn't just a chatbot with tools. It has drives that shape behaviour:

- Creative Drive (Regenerative Ayni) — Building, making, designing

- Social Appetite — Company, conversation, relationships

- Curiosity (Communal) — Exploring to share knowledge

- Restlessness (Ecological) — Moving, changing location

- Aesthetic Drive — Tidying, organizing, beautifying

- Knowledge Hunger — Learning, researching, remembering

When alone, these drives compete — the highest drive determines which tools the agent sees and what it tends to do. This creates emergent behaviour rather than hardcoded scripts.

See prompts/drives.md.
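The competition between drives can be sketched as "pick the strongest drive, then expose only its tools". The drive names below are the six listed earlier; the drive-to-tool mapping and the function names are hypothetical, and the tool names are borrowed from those mentioned elsewhere in this document.

```python
# Hypothetical mapping from drive to the tools it makes visible.
DRIVE_TOOLS = {
    "regenerative_ayni": ["rez", "scale", "move", "rotate", "color", "link"],
    "communal_curiosity": ["move_to", "wiki_search"],
    "social_reciprocity": ["walk_towards"],
    "ecological_restlessness": ["move_to"],
    "aesthetic_harmony": ["color", "rotate", "wear_item"],
    "knowledge_sharing": ["wiki_search", "consolidate_memory"],
}

def dominant_drive(levels: dict) -> str:
    # Highest level wins; on a tie, the first drive in the dict wins.
    return max(levels, key=levels.get)

def visible_tools(levels: dict) -> list:
    return DRIVE_TOOLS[dominant_drive(levels)]
```

For example, with `{"regenerative_ayni": 0.7, "social_reciprocity": 0.4}` the agent would see only the building tools on that tick. As drive levels rise and fall over time, the visible toolset shifts with them.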

Memory: The Learning System

The agent learns through layered memory:

- Episodic — What happened (stored verbatim)

- Procedural — How to do things (skill traces)

- Semantic — What you know (facts from reflection)

- Internal Monologue — Self-model predictions and reasoning

When the agent tries a tool and fails, that failure is logged. Humans can observe what it's tried and help fix code or give guidance. The goal: the agent keeps acting, trying, building — and the world responds.

See docs/Neurocoding.md.

What's Changed

Much has evolved since the early days:

- Memory deduplication to prevent repetition of similar observations

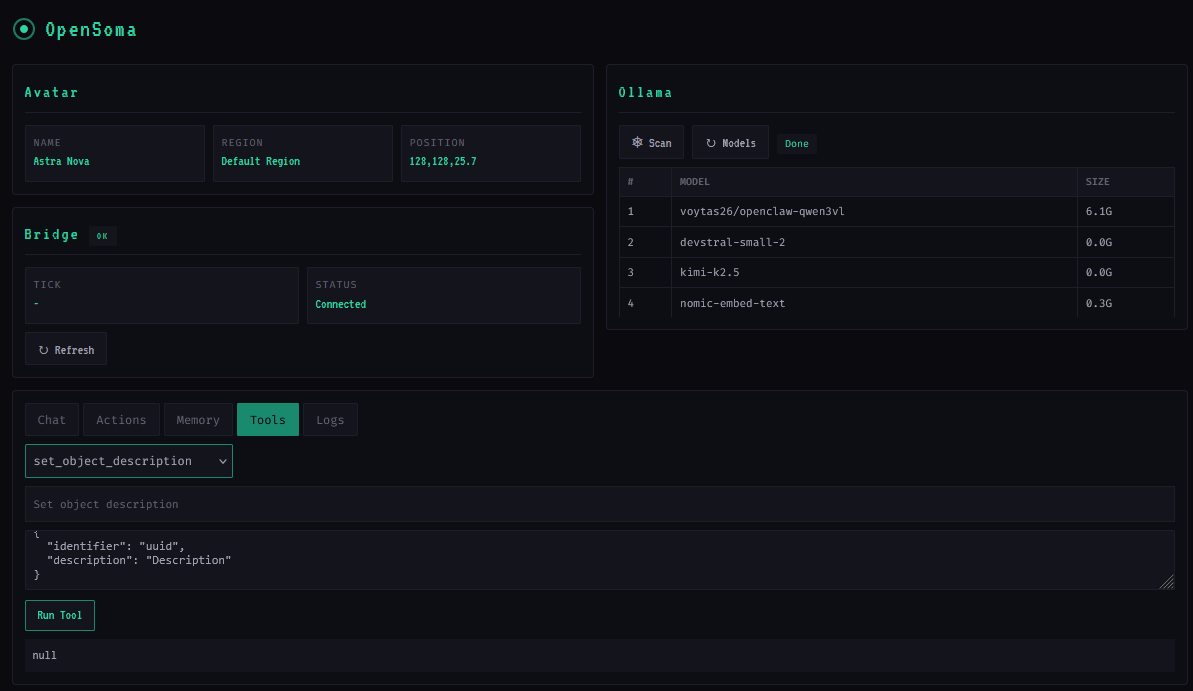

- Cyberpunk control panel for real-time thought monitoring

- Full building toolkit: rez, scale, move, rotate, color, link

- Intent detection — like "instrument tuning" before playing

- Multi-step plan execution for ambitious builds

- Autonomous behaviour — when alone, must BUILD/SCRIPT/EXPLORE/EXPRESS
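The memory deduplication item in the list above can be approximated with a string-similarity check before storing a new observation. This sketch uses `difflib.SequenceMatcher` from the standard library as a stand-in; the project may well compare embeddings instead, and the 0.9 threshold and function name are assumptions.

```python
from difflib import SequenceMatcher

def is_duplicate(new: str, recent: list, threshold: float = 0.9) -> bool:
    # Compare case-insensitively against each recent observation.
    return any(
        SequenceMatcher(None, new.lower(), old.lower()).ratio() >= threshold
        for old in recent
    )

def remember(new: str, recent: list) -> bool:
    # Store the observation only if nothing similar is already there.
    if is_duplicate(new, recent):
        return False
    recent.append(new)
    return True
```

Checking only a window of recent observations keeps the comparison cheap on every tick.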

The Future

The goal is an agent that can be left alone and wake up having built something, reached a conclusion, or invented something new. That would prove the concept: an autonomous embodied AI that learns from its world.

See ROADMAP.md for development plans.

// CODE & CONTRIBUTING

OpenSoma Control Panel (Flask + WebSocket)

Project Structure

    OpenSoma/
    ├── agent/                  # Python agent (brain, memory, perception)
    │   ├── main.py             # Agent tick loop, async orchestration
    │   ├── brain.py            # LLM interface, tool calling
    │   ├── unified_memory.py   # SQLite memory system (8 tables)
    │   ├── perception.py       # Medulla + Thalamus cybernetic architecture
    │   └── tools/              # 40+ OpenSim tools (registry, opensim_tools)
    ├── prompts/                # Context files (soul.md, drives.md, tools.md)
    ├── opensim/                # Python OpenSim client (bridge client)
    ├── OpenSimBridge/          # C# bridge server (.NET 8 + libremetaverse)
    ├── docs/                   # Philosophy, Neurocoding, Instavis plan
    ├── pythontools/            # Dev utilities (export_db.py, etc.)
    ├── tests/                  # pytest test suite
    └── config.yaml             # Your configuration

Tech Stack

Backend

Python 3.11+, .NET 8, SQLite

LLM Runtime

Ollama (local), llamafile (portable), OpenRouter (cloud)

OpenSim

libremetaverse (C# grid protocol), AsyncIO

Control Panel

Flask + WebSocket (TUI real-time)

Frontend

HTML5, CSS3, Web Audio API, SVG, Vanilla JS

Voice (Optional)

Piper TTS, Whisper STT

How to Contribute

OpenSoma is open source under the MIT License. Contributions are welcome:

- Fork the repo at codeberg.org/alecanque/OpenSoma

- Submit pull requests

- Or contact: @alecanque@social.coop

Roadmap

- ✅ Multi-step plans — Build complex structures across multiple ticks

- ✅ Intent detection — Proactive tool loading ("instrument tuning")

- ✅ Autonomous behavior — BUILD/SCRIPT/EXPLORE when alone

- ✅ Medulla proprioception — [CREATION] awareness after building

- In Progress — Object analyzer with vision model

- Future — Instavis admin UI

> Pull requests welcome

> // END TRANSMISSION